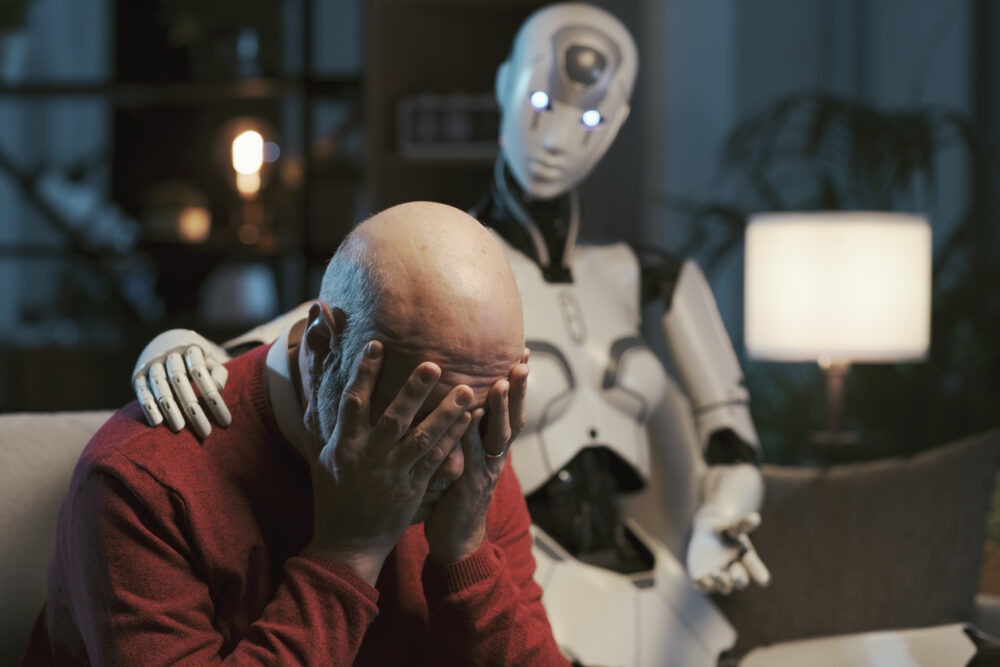

The AI therapist is moving into everyday life quickly. Many people call this progress in mental health technology because it offers speed, lower cost, and constant access. Yet this shift often points to a deeper gap. People adopt AI therapy because many systems around them no longer provide reliable support when emotional strain rises.

Why the AI therapist feels safer than human support

People do not choose AI mental health tools because they love machines more than humans. They choose them because they cannot reach human help easily, or they cannot afford it, or they do not trust the environment around them. Many workplaces still reward performance more than openness. Many healthcare systems still struggle with capacity. Burnout conversations happen, but leaders rarely redesign work to reduce the pressure that causes burnout.

An AI therapist offers a controlled space. It does not judge. It does not gossip. It does not create workplace consequences. For someone in a high-pressure role, that predictability can feel safer than a human conversation.

AI therapy and emotional self-management

AI therapy can support reflection, but it can also normalize emotional self-management in isolation. When people process distress only through software, the signal stops with the individual. Leaders do not hear it. Systems do not respond to it. Teams do not adjust workloads or expectations because the pain never reaches them.

That shift changes the story people tell themselves. They treat stress as a personal regulation task. They treat burnout as a habit problem. They stop naming the structural drivers that create strain in the first place. For a clear summary of safety and ethical concerns, read the Stanford HAI analysis on the dangers of AI in mental health care.

AI therapist tools and the risk of dependency

As the AI therapist becomes more common, many products optimize for engagement. They aim to keep users returning. They measure usage more than long-term resolution. This design can encourage reliance, especially when users feel ongoing pressure and lack real support around them.

An AI therapist cannot challenge a toxic workflow. It cannot change management behavior. It cannot lower unrealistic targets. It can only respond inside the boundaries of a conversation. When people rely on it as the primary support channel, they may feel temporary relief while the cause of the distress stays in place.

What the AI therapist trend reveals about work culture

The rise of the AI therapist reflects how modern work treats stress. Many organizations push resilience as a personal skill while they keep the same structures that generate overload. Employees then carry the responsibility to self-regulate, even as job security weakens and economic pressure rises.

In that environment, an AI therapist fits easily. It offers support without disruption. It helps people cope without forcing institutions to change. That convenience can hide the real problem.

A question worth asking about the AI therapist

The key question is not whether AI therapy can help someone feel better for a moment. It is whether society accepts emotional survival as an individual responsibility supported by software. When technology absorbs what human systems refuse to hold, the risk is that this arrangement becomes normal. That normalization deserves attention.